AI implementation is no longer a technology problem. It’s a leadership problem.

Many organizations are talking about AI implementation as if it’s a tooling problem – do we subscribe to ChatGPT or Claude? How do we integrate our chosen solution with our existing software? Do we have a policy? Can we show off the increase in AI usage to the board, or to our shareholders?

The uncomfortable truth, however, is that AI seldom goes wrong because the technology fails. It goes wrong because leaders haven’t adapted their behaviours and thinking to the new operating environment in which AI is everywhere.

Recently we were talking about this at our leadership meeting. One theme kept recurring. AI is already firmly inside organizations – sometimes embedded in products, sometimes through enterprise-wide rollouts – and leaders feel increasingly behind the curve. This is not because they don’t have access to the right technology. It’s because they don’t have clarity about how to lead in an AI world.

What does this look like?

Shadow adoption is the default

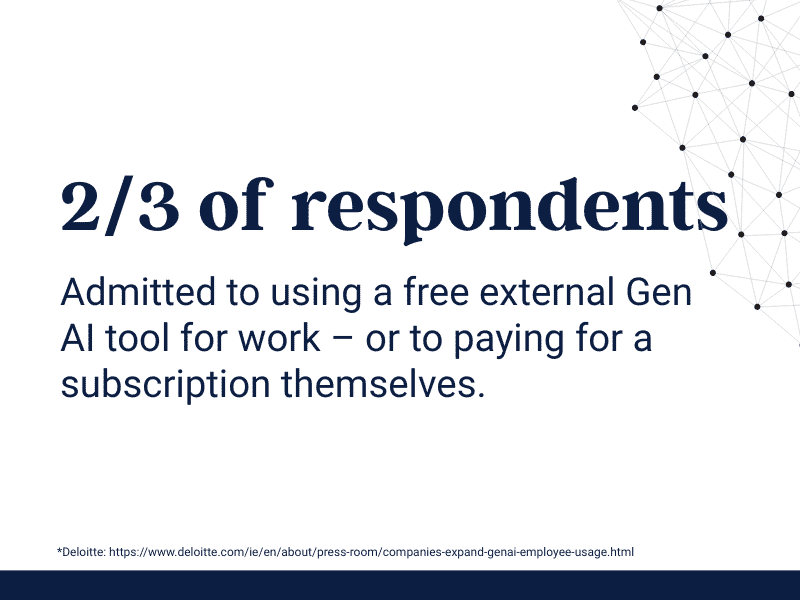

In a recent survey, nearly 2/3 of respondents¹ admitted to using a free external Gen AI tool for work – or to paying for a subscription themselves. Couple this with the fact that over 20% of organizations² don’t even have a Gen AI policy, and the outcome is an increasing risk of sensitive data being inappropriately uploaded and client (or internal) confidentiality being breached.

This isn’t a technical gap. It’s a leadership gap. Where leaders don’t have a clear understanding of what responsible, sensible use looks like, teams will use whatever tools are available while trying to deliver better, faster and cheaper. Leaders then discover too late that next year’s budget has been uploaded to a public model, or that they’ve been making strategic decisions based on unverified AI input.

Governance on paper is not governance in practice

Even where governance does exist (and this has increased markedly in the last 12 months) it often takes the form of “we have a policy on the intranet”. Unfortunately, governance on paper does not equate to governance in practice. Having your CTO (or often your COO) draft a document and publish it internally does not magically create safe behaviour. If anything, a poorly thought-through policy can drive usage underground.

As with any other element of governance, success lies in real-world behaviour. The real barometers are how leaders talk about AI, how they role-model its use, what people feel safe admitting and how (if at all) AI-assisted outputs are checked. Ask yourself: “how has our AI policy been brought to life, embedded and reinforced?” If you don’t have a complete answer to that question, you don’t have governance. You have paperwork.

Data quality can no longer be taken for granted

Gen AI has changed both how, and how quickly, information moves around organizations. When leaders used to ask their teams for information and recommendations, they were able to trust that the data collection, analysis and judgement put in front of them was the result of hours and days of human effort. Now they receive work produced quickly and not always reviewed thoroughly.

As one senior leader put it recently, they are drowning in an ever-increasing quantity of data whilst being increasingly unsure as to the quality of that data. For them, the new challenge is not “how do I get the information I need to make decisions” but rather “how can I trust the information I’m being given”.

Ensuring data quality is again a leadership issue. It needs leaders to know the answer to questions such as:

- What counts as good evidence?

- How do we distinguish between AI-informed, AI-assisted and AI-executed?

- How do we ensure that key judgements are still made by humans?

In environments where information is produced faster and decisions must be made more frequently, the organizations that succeed will not simply be those with access to AI. They will be those whose leaders can exercise consistent judgement under these conditions.

AI amplifies people, not just productivity

AI is not an objective, rational thinking partner. Rather, it is more like a fairground mirror – magnifying some elements of your thinking and lessening others. Vague prompts tend to create even vaguer answers, and narrow input leads to even narrower output. At the same time as work is speeding up, biases and human habits are being amplified.

This means that the old adage of “know your people” becomes truer than ever. Who thinks critically and is likely to have challenged AI’s grandiose or unlikely claims? Who defaults to speed of execution? Who values nuance and complexity, and who prefers simplicity? The answers to those questions need to significantly influence how you make use of AI-supported output.

Trust is even more vital than before

The existence of AI tools – particularly in environments where shadow adoption is rife – forces leaders and teams into new types of trust conversation. In open and healthy teams, people share where and how AI has helped. “Here’s what I did, here’s what Claude added.” Where that trust has not been built, the risk is that people hide AI use to appear more efficient or more competent, or simply to move faster.

AI adoption is therefore absolutely linked to psychological safety and genuine accountability. Leaders need to create an environment where people feel safe to be transparent and share how and where AI has been used, and where they understand that the risks of unacknowledged AI involvement outweigh the potential embarrassment of admitting to using ChatGPT.

So what should leaders do?

Leaders therefore need to treat this not simply as a technology challenge, but as a leadership infrastructure problem. That infrastructure has four parts:

- Decision discipline. Where can AI be used, and where can’t it? How do we document decisions?

- Authority boundaries. What does “having a human in the loop” actually mean?

- Behavioural norms. A shared language that supports the distinctions between AI-supported and AI-created, and permission to be transparent about AI usage.

- Capability building. Not in using the tools, but in leading the teams who use the tools.

AI will keep changing. Tools will change, prices will move, capabilities will increase (or decrease). What won’t change is the reality that the organizations that gain most from this change will be those whose leaders can operate in an environment where information is cheap, outputs are rapid and judgement is the differentiator.

At Inspirational Group we are increasingly exploring AI not simply as a technology adoption challenge, but as a leadership capability issue. In many organizations the real question is not whether AI is being used, but whether leaders have the judgement and decision discipline to operate effectively in AI-influenced environments.

¹ & ² : https://www.deloitte.com/ie/en/about/press-room/companies-expand-genai-employee-usage.html
