Stop Asking. Start Listening.

Most feedback starts with good intent. The questions are sensible. The logic is sound. Someone needs to understand what people think. We are talking about post-programme feedback, 360° development feedback, engagement surveys, and more.

What is much less clear, in practice, is whether the method actually delivers that understanding.

The standard approach is a survey. Likert scales. A few open text boxes. A thank-you screen. And then, weeks or months later, a report full of percentages that is hard to argue with and even harder to act on.

I’ve been in plenty of situations where that report is treated as insight. I understand why. Something was measured. Numbers were produced. It feels like evidence.

The harder question is whether it actually tells you what people experience. Or whether it simply tells you what people were willing to click.

The Format Is the Problem

Surveys don’t just collect opinions. They constrain them.

And over time, an entire industry has formed around that constraint. Specialists in question design. Benchmark databases. Norm tables. Proprietary scales. A whole architecture built on the assumption that the survey is the instrument, and that better surveys produce better truth.

What that industry rarely asks is whether the format itself is the problem.

We became expert at asking questions in a particular way, and at interpreting what the results apparently say. But in doing so, we quietly accepted a ceiling on what was knowable. We couldn’t look for patterns we hadn’t anticipated. We couldn’t surface hypotheses we hadn’t thought to test. We couldn’t follow a thread that a respondent introduced but a scale couldn’t hold. That kind of discovery requires a different structure altogether, one that intelligent avatars, for the first time, make possible at scale.

A Likert scale forces a number out of something that isn’t a number. A person might be broadly supportive of something, deeply frustrated by one specific part of it, and unclear about what happens next. The scale gives you a 3. The 3 tells you almost nothing useful.
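To make that concrete, here is a minimal sketch in Python. The respondents, the aspect names and the idea that an overall rating behaves like a rounded average of underlying feelings are all illustrative assumptions, not a model of how any real survey is scored. It simply shows three quite different experiences collapsing into the same number.

```python
from statistics import mean

# Hypothetical respondents. Each holds a different view of three aspects
# of the same programme (1 = very negative, 5 = very positive), but the
# survey only records one overall number.
respondents = {
    "supportive_but_blocked": {"overall_idea": 5, "one_specific_part": 1, "next_steps": 3},
    "indifferent": {"overall_idea": 3, "one_specific_part": 3, "next_steps": 3},
    "hopeful_but_unclear": {"overall_idea": 4, "one_specific_part": 3, "next_steps": 2},
}


def overall_score(aspects):
    """Collapse several aspect ratings into one scale point.

    Assumption for illustration only: the overall rating is the
    rounded mean of the underlying aspect ratings.
    """
    return round(mean(aspects.values()))


scores = {name: overall_score(aspects) for name, aspects in respondents.items()}

for name, score in scores.items():
    print(f"{name}: {score}")
```

Every respondent in this sketch ends up with the same score, even though only one of them is actually neutral.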

The open text box is where the real answer usually lives. But by the time someone reaches it, they’ve clicked through twelve identical rows. They type something brief. Often they type nothing.

The data you receive is abundant. The intelligence is thin.

This isn’t a design flaw that better survey questions can fix. It’s a structural phenomenon. Surveys are built for confirmation, not for understanding. They’re good at telling you what proportion of people chose option B. They’re poor at telling you why, or what it would take to change that.

What Happens When You Actually Listen

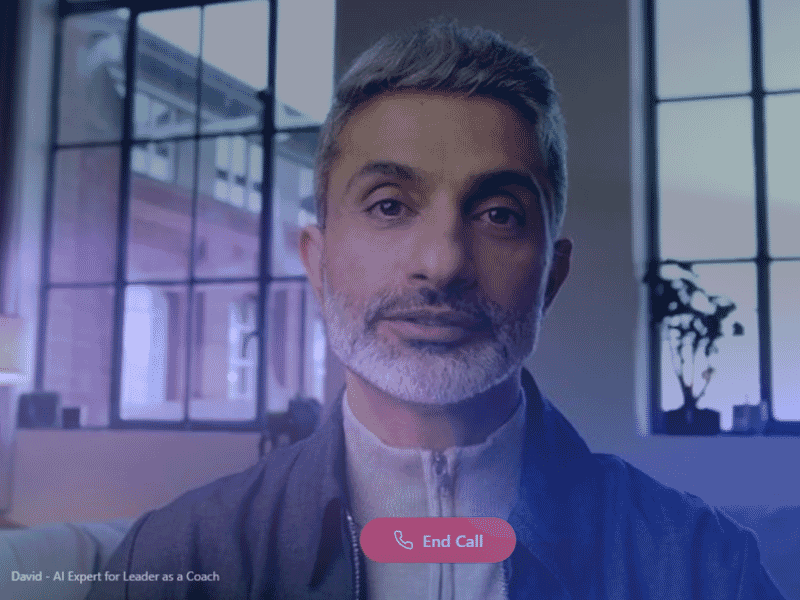

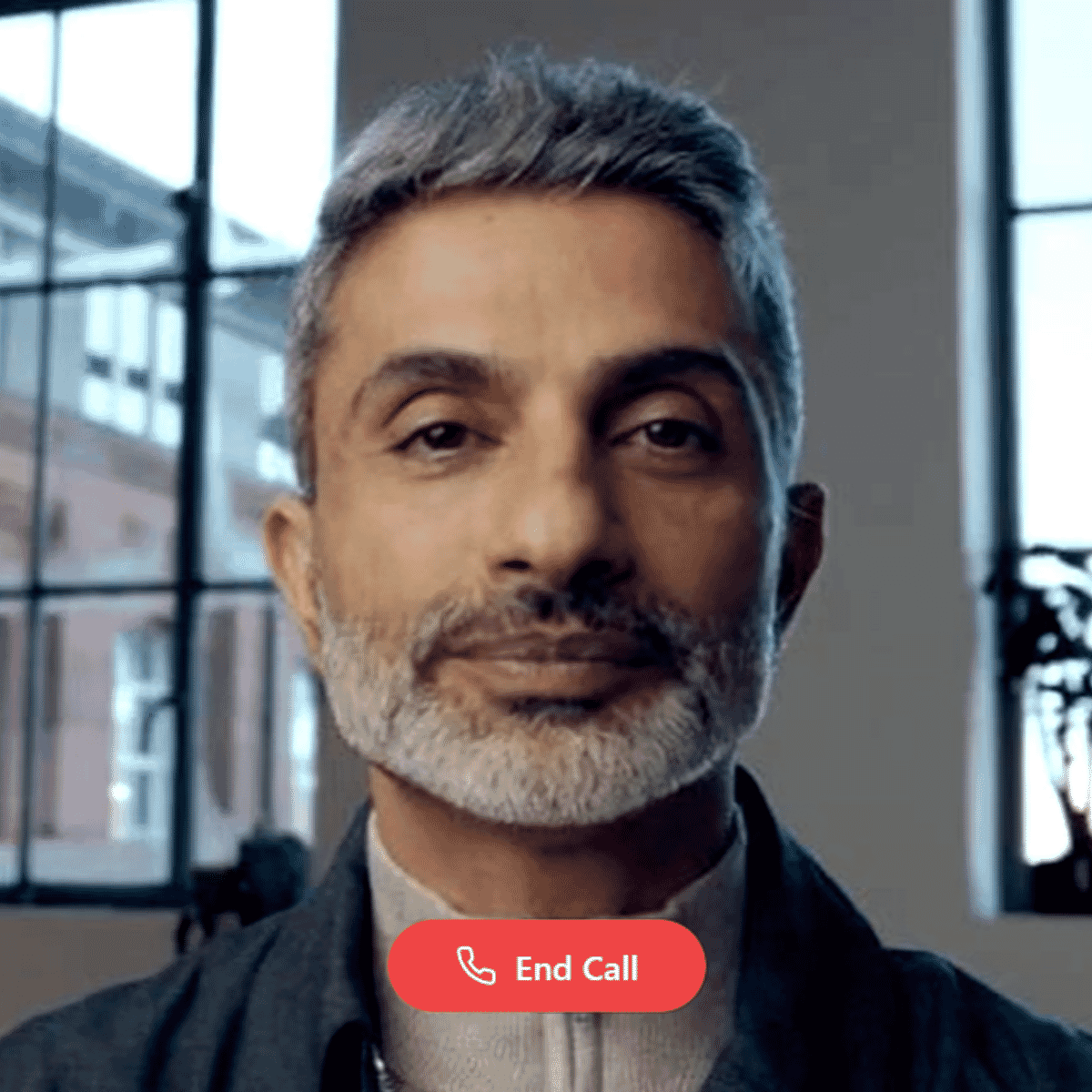

An AI-powered interview avatar is not a chatbot with a face. It’s a conversational research partner, one that runs at scale, is available around the clock, and adapts in real time to what someone actually says.

Here’s what the experience looks like in practice.

Someone is invited to share their view. They’re greeted by a presence: a face, a voice, a natural opening. They speak freely in their own words. The avatar listens. It follows up with behavioural questions: “Can you tell me about a time when you experienced that?” A survey can’t do that. An avatar can. Our avatars are trained to ask powerful questions. They don’t coach. They explore hypothetically, focus on exceptions, stay resource-oriented, and listen carefully to find the real reason behind the answer.

The conversation responds to the person, not to a predetermined script.

That matters because people are wired for conversation. We find it natural to explain ourselves when someone is listening. We’re more honest, more specific, and more forthcoming when we feel that our answer is being heard rather than processed.

Research backs this up. Conversational interfaces consistently produce longer responses, higher completion rates, and greater emotional openness than equivalent survey formats. Not because the technology is impressive. Because the human experience of being asked is different.

The Technology That Makes This Real

The shift from survey to avatar interview has been enabled by several things converging at once.

Large language models now underpin conversational AI that understands context, not just keywords. An avatar can be trained on your specific domain, whether that’s employee experience, customer feedback, public consultation, or clinical research, so the questions it asks, and the follow-ups it chooses, are genuinely relevant.

Realistic avatar rendering has reached a point where a digital face can convey attentiveness and warmth without tipping into the uncanny. Voice synthesis and speech recognition have become fluent enough that respondents can simply speak, which lowers barriers significantly, particularly for mobile users, older adults, or anyone for whom formal typing feels effortful.

Beneath the conversation, sentiment and emotion analysis can run in parallel. Not just capturing what was said, but the register in which it was said. Hesitation. Confidence. Frustration. These signals, aggregated across thousands of conversations, tell a different kind of story.
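As a rough illustration of what aggregating those signals could look like, here is a minimal Python sketch. It assumes an upstream analysis step has already tagged each conversation turn with a topic and a detected signal. The record format, the topic names and the signal labels are hypothetical, and this is not a description of any particular product’s pipeline.

```python
from collections import Counter

# Hypothetical output of an upstream analysis step: one record per
# conversation turn, already tagged with a topic and a detected signal.
turns = [
    {"conversation": "c1", "topic": "onboarding", "signal": "frustration"},
    {"conversation": "c1", "topic": "manager support", "signal": "confidence"},
    {"conversation": "c2", "topic": "onboarding", "signal": "frustration"},
    {"conversation": "c2", "topic": "next steps", "signal": "hesitation"},
    {"conversation": "c3", "topic": "onboarding", "signal": "confidence"},
    {"conversation": "c3", "topic": "next steps", "signal": "hesitation"},
]


def signals_by_topic(records):
    """Count how often each signal appears for each topic."""
    counts = {}
    for record in records:
        topic_counts = counts.setdefault(record["topic"], Counter())
        topic_counts[record["signal"]] += 1
    return counts


summary = signals_by_topic(turns)

for topic, signal_counts in summary.items():
    # most_common() lists signals from most to least frequent.
    print(topic, signal_counts.most_common())
```

Grouping by topic rather than by respondent is a deliberate choice here: it shows where hesitation or frustration clusters, which is the kind of pattern a single overall score cannot show.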

And because the avatar follows a research framework rather than a rigid script, every conversation is unique. Because every person is.

What You Actually Walk Away With

The shift from survey to avatar interview isn’t just a better experience for respondents. It’s a fundamentally different quality of intelligence for the people who need to act on it.

You stop choosing between scale and depth. An avatar can run thousands of nuanced conversations simultaneously. You get qualitative richness at quantitative volume.

You reduce the distortions that uniform scales introduce. Social desirability bias. Central tendency effects. Respondents clicking quickly to get it done. Conversational responses aren’t perfect, but they’re free from the particular distortions that come from forcing experience into a numeric grid.

You get themes and patterns surfacing in real time, not six weeks later in a slide deck.

And you reach people you were previously missing. Voice-first interaction, multilingual capability, and natural dialogue lower barriers for respondents who find formal written questionnaires alienating, which, if you think about it, is quite a lot of people.

The Question Worth Sitting With

The next time your organization is about to commission research or a feedback programme, it’s worth asking: what do we actually need to know?

And what kind of conversation would it take to find out?

If you need more than a percentage. If you need the language of experience, not the language of scales. If you need to understand why, not just what. If you need to surface patterns and correlations, and to work with hypotheses and assumptions. Then the checkbox isn’t the right tool.

The technology to have better conversations, at scale, exists today. Ask for a demo below.

Book a Demo

The Inspirational Group works with organizations to design and deploy AI-powered research and feedback solutions, including conversational interview avatars.
